Hey everyone, welcome back to My Weird Prompts. We are at episode one thousand forty-four, which is kind of wild to think about. I am Corn, and sitting across from me in our study here in Jerusalem is my brother, Herman.

Herman Poppleberry, here and ready to dive in. It is a beautiful day outside, but I have been glued to a monitor all morning looking at some of the latest releases from Google DeepMind.

It is funny you say that, because our housemate Daniel actually sent us a prompt this morning about exactly that. He has been tracking the frontier tools coming out of Google Labs, and he noticed a massive shift in how they are talking about generative artificial intelligence. It is not just about chatbots or image generation anymore. It is about worlds. The Jerusalem demo they just released isn't just a game or a tech demo; it is a blueprint for the next decade of spatial computing.

Daniel has a great eye for this. The labs are really the place where you see the raw DNA of what is coming next. And he is right. We are seeing this transition from AI-generated assets, like a single three-dimensional model or a texture, to AI-generated environments that are physically and spatially consistent. This shift from Large Language Models to World Models signals that the bottleneck for augmented reality and virtual reality is no longer the hardware on our faces, but the speed and cost of content generation.

The demo that really caught Daniel's attention, and mine too, was the simulation of Jerusalem. Seeing a digital twin of the city we live in, generated with that level of fidelity, is a bit surreal. But the question he posed to us is the real hook: what happens when this moves beyond gaming? What is the industrial, educational, or even geopolitical utility of a perfect, synthetic digital world? Why is Google suddenly so obsessed with building digital twins of physical cities?

That is the big shift. For the longest time, we thought about world-building in terms of video games or the metaverse hype of a few years ago. But what DeepMind is doing now is fundamentally different. They are moving into what they call World Models. Before we go further, we should define that. In the context of the World-Synth whitepaper that DeepMind put out in January of two thousand twenty-six, a World Model is a neural network that doesn't just predict the next word or pixel, but predicts the next state of an entire environment based on physical laws and spatial logic.

I want to pin down that definition for our listeners, because I think people hear "world models" and think of a really big sandbox game like Minecraft or Grand Theft Auto. But technically, it means something much more specific, right?

In traditional gaming, you have procedural generation. You write a bunch of rules, like if the altitude is high, put snow here, or if the terrain is flat, put a road there. It is essentially a complex set of if-then statements. Neural world synthesis, which is what we are seeing now, does not use those hardcoded rules. Instead, it uses a diffusion-based model to understand the latent space of three-dimensional environments. It is learning the underlying structure of reality rather than following a script written by a programmer.
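For readers following along in text, the rule-based approach described here can be sketched in a few lines. This is only an illustration of hardcoded if-then generation: the function name `classify_tile`, the thresholds, and the tile names are assumptions made up for the example, not taken from any real engine.

```python
def classify_tile(altitude_m: float, slope_deg: float) -> str:
    """Hand-written procedural rule: every outcome is an explicit if-then branch."""
    if altitude_m > 2000:   # assumed snow line, purely illustrative
        return "snow"
    if slope_deg < 5:       # assumed threshold for "flat enough for a road"
        return "road"
    return "grass"


# Each entry is (altitude in metres, slope in degrees).
terrain = [(2500.0, 20.0), (300.0, 2.0), (800.0, 15.0)]
tiles = [classify_tile(alt, slope) for alt, slope in terrain]
print(tiles)
```

Every behavior in this sketch had to be written by a programmer. The learned approach discussed in the episode replaces those branches with statistics absorbed from training data.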

So, instead of a programmer telling the computer what a city looks like, the AI has ingested millions of hours of Street View data, satellite imagery, and probably drone footage, and it has learned the statistical probability of how a city is structured. It knows that a balcony usually has a railing and a street usually has a curb.

Right. It understands that a door usually leads to a room, and a road usually has a sidewalk. But the breakthrough in early two thousand twenty-six, specifically mentioned in that whitepaper, is temporal coherence and spatial consistency. In older models, if you turned around in a generated world and then turned back, the building behind you might have changed its windows or its color. It flickered. It was like a dream where the geography shifts as soon as you stop looking at it.

That is the hallucination problem, just in three dimensions. We have talked about that with text, where the AI forgets the beginning of the sentence by the time it gets to the end. But in a world, if the door disappears when you turn around, the simulation is useless for anything serious.
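The flicker problem can be illustrated with a toy contrast between a generator that resamples a detail on every look and one that derives the detail from the world position. This is a deliberately simplified stand-in using a hash, not a description of how any neural model works; both function names and the "window count" detail are hypothetical.

```python
import hashlib
import random


def stateless_facade(rng: random.Random) -> int:
    """Resamples the window count on every look, so the building can change."""
    return rng.randint(2, 8)


def anchored_facade(x: int, y: int, world_seed: int = 0) -> int:
    """Derives the window count from the world position, so it never changes."""
    digest = hashlib.sha256(f"{world_seed}:{x}:{y}".encode()).digest()
    return 2 + digest[0] % 7  # always a value between 2 and 8


rng = random.Random(42)
looks = [stateless_facade(rng) for _ in range(5)]           # may differ per look
anchored_looks = [anchored_facade(10, 3) for _ in range(5)]  # identical every time
print("stateless:", looks)
print("anchored: ", anchored_looks)
```

The point of the sketch is only that tying generated content to a fixed spatial key makes turning away and turning back return the same result.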

But the new World-Synth architecture uses a three-dimensional latent space that is anchored. When the model generates a frame, it is not just predicting pixels; it is predicting the geometry behind those pixels. That is how they are getting sixty-frames-per-second inference on consumer-grade hardware now, a forty percent improvement over what we were seeing just six months ago in late two thousand twenty-five. They have moved the computation from the cloud to the edge by optimizing how the model traverses that latent space.
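The numbers in that claim are easy to put in context with some arithmetic. The sketch below assumes the "forty percent improvement" refers to frame rate, which the episode does not specify; if it refers to latency instead, the earlier figure would differ.

```python
target_fps = 60

# Time available to generate each frame at the target rate.
frame_budget_ms = 1000 / target_fps

# Assumption: the 40% improvement is measured in frames per second.
previous_fps = target_fps / 1.40

print(f"frame budget: {frame_budget_ms:.1f} ms")
print(f"implied earlier rate: {previous_fps:.1f} fps")
```

Under that assumption, the model has under 17 milliseconds to produce each frame, up from an implied rate of roughly 43 frames per second.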

I remember we touched on this a bit back in episode five hundred eighty-four when we were talking about Alithia, that autonomous research agent. The idea of an AI that can not only think but also simulate the environment it is thinking about. If you are a researcher or an engineer, you do not just want an AI that tells you how a bridge might fail; you want an AI that can build a digital twin of that bridge and run a million stress tests in a synthetic world that obeys the laws of physics.

That physics-awareness is the key. These are not just pretty pictures. They are physics-aware neural networks. If you drop a virtual ball in the DeepMind Jerusalem simulation, the model understands gravity, friction, and the specific texture of the Jerusalem stone. It is not calculating those things with a traditional physics engine like Havok or the one built into Unreal Engine. It is predicting the outcome based on its training. It knows how the light should bounce off that specific type of limestone at four in the afternoon in July because it has seen it a billion times in the training data.

That brings up a fascinating technical challenge though. How do you ground that in reality? If I am using this for something serious, like urban planning or disaster response, I cannot have the AI just making up a shortcut through a building because it looks aesthetically pleasing in the latent space. How does Google ensure that the synthetic world matches the physical one?

That is where the multi-modal training data comes in. Google has the ultimate trump card here, which is the data they have been collecting for decades. They are combining the historical archives of Google Earth with real-time sensor data. When they build that digital twin of Jerusalem, they are cross-referencing it against billions of data points to ensure that the synthetic world matches the physical one within a margin of centimeters. They are using Gaussian Splatting and Neural Radiance Fields to turn flat images into volumetric data that the world model can then use as a foundation.

It makes me think about episode seven hundred eighty, where we talked about the golden cage of the Google ecosystem. If Google is the only one with the data to build a perfect, real-time digital twin of the planet, they are not just a search engine anymore. They are the landlords of the digital reality we are going to be using for augmented reality and simulation. If you want to build an app that interacts with the real world, you have to rent the world from Google.

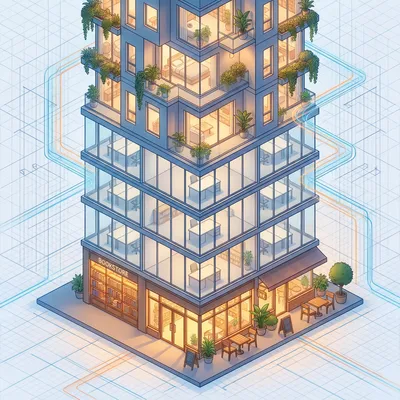

It is a massive competitive moat. But let's look at what Daniel was asking about: the applications beyond gaming. Think about urban planning. Right now, if the city of Jerusalem wants to change the light rail path or add a new park, they have to hire architects, run CAD models, and try to visualize the impact on traffic and pedestrian flow. It is a slow, manual process that often misses the mark.

In a neural world model, you could literally just type a prompt: Show me the impact of a four-story building on this corner during sunset in July. And because the model understands the world, it generates the shadows, the heat retention of the stone, and the sightlines instantly. You are moving from a static map to a living simulation.
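For a sense of what the shadow part of that prompt involves, the basic geometry is a one-line formula: on flat ground, shadow length equals building height divided by the tangent of the sun's elevation angle. The sketch below is plain trigonometry, not anything a world model is confirmed to use. The three-metre storey height and the sun angles are assumptions chosen for illustration.

```python
import math


def shadow_length_m(height_m: float, sun_elevation_deg: float) -> float:
    """Length of the shadow cast on flat ground by an object of the given height."""
    if not 0 < sun_elevation_deg <= 90:
        raise ValueError("sun elevation must be in (0, 90] degrees")
    return height_m / math.tan(math.radians(sun_elevation_deg))


storeys = 4
storey_height_m = 3.0            # assumed floor-to-floor height
building_height_m = storeys * storey_height_m

# Assumed sun elevations as the afternoon moves toward sunset.
for elevation in (45, 20, 10, 5):
    length = shadow_length_m(building_height_m, elevation)
    print(f"sun at {elevation:>2} deg -> shadow about {length:.0f} m")
```

The shadow grows rapidly as the sun approaches the horizon, which is why a sunset query is the demanding case for sightlines and neighboring buildings.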

And it goes deeper than just visuals. You could simulate a flash flood in the Kidron Valley. You could tell the model: simulate five inches of rain in two hours and show me which basements in Silwan are at risk. Because the model has the three-dimensional topography and understands how water flows over these specific surfaces, it gives you a high-fidelity simulation that used to take weeks of supercomputer time. It understands the porosity of the Jerusalem stone and the drainage capacity of the ancient sewers.
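To give a rough feel for the flood question, here is a deliberately crude "bathtub" sketch: all the rain that falls on a small elevation grid is assumed to pool in the lowest cells up to a common water level. It ignores flow paths, infiltration, drainage, and the two-hour duration, so it is nothing like a real hydraulic solver or a learned world model. The grid values and the function name `flooded_cells` are hypothetical.

```python
INCH_M = 0.0254  # metres per inch


def flooded_cells(elevation_m, rain_in, cell_area_m2=1.0):
    """Return (water_level, mask) for a bathtub-style fill of the grid."""
    cells = [h for row in elevation_m for h in row]
    volume = rain_in * INCH_M * cell_area_m2 * len(cells)  # total rain volume

    # Bisection on the water level so that stored volume matches the rain volume.
    lo, hi = min(cells), max(cells) + rain_in * INCH_M
    for _ in range(60):
        level = (lo + hi) / 2
        stored = sum(max(0.0, level - h) for h in cells) * cell_area_m2
        if stored < volume:
            lo = level
        else:
            hi = level
    level = (lo + hi) / 2
    return level, [[h < level for h in row] for row in elevation_m]


# Toy 3x3 terrain (metres) with a low point in the middle.
grid = [
    [5.0, 4.0, 5.0],
    [4.0, 1.0, 4.0],
    [5.0, 4.0, 5.0],
]
level, mask = flooded_cells(grid, rain_in=5)
print(f"water level: {level:.3f} m")
for row in mask:
    print(row)
```

In this toy grid only the low central cell ends up under water. A serious simulation would route water across the terrain over time, which is the expensive part the episode says used to require supercomputer time.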

That is the shift from search to simulation that we have been seeing throughout two thousand twenty-six. We used to ask Google for information about a flood. Now we are asking Google to simulate the flood for us. We are asking it to predict the future of a physical space.

And let's talk about education, because this is where it gets really moving. We live in a city with thousands of years of history. Much of it is buried or destroyed. With these world models, you can integrate archaeological data into the current digital twin. You could be walking through the Old City with augmented reality glasses, and the world model fills in the gaps. It reconstructs the Second Temple or the Byzantine Cardo right over the existing ruins with perfect spatial alignment.

It is essentially a time machine. But it is an interactive one. You are not just watching a video; you are in a world that responds to you. If you touch a reconstructed wall, the AI can generate the haptic feedback or the sound of your hand hitting that specific type of ancient limestone. You could have a conversation with a synthetic version of a historical figure who is aware of the spatial environment you are both standing in.

And for students, imagine a history class where you are not reading about the signing of the Declaration of Independence. You are standing in a high-fidelity reconstruction of Independence Hall. The AI has ingested every diary entry, every historical map, and every architectural drawing to recreate the atmosphere, the lighting, even the specific weather of that day. It is the democratization of experience. You don't need a plane ticket to Athens to see the Parthenon as it was; you just need a world model.

I can see how that would be incredible for training too. We often talk about autonomous drones and cars. One of the biggest bottlenecks for self-driving technology has always been the edge cases. The weird stuff that happens once in a million miles, like a ball rolling into the street followed by a child, or a specific type of glare on a wet road at night.

Right, the long tail of probability. In the past, companies like Waymo or Tesla had to wait for these things to happen in the real world or try to manually program them into a simulator. But with DeepMind's world-building tools, you can generate an infinite number of these edge cases. You can tell the model: generate a thousand scenarios where a drone has to navigate a narrow Jerusalem alleyway during a windstorm with laundry hanging across the path.
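The "generate a thousand scenarios" idea can be sketched as randomized scenario sampling. The sketch below only produces the parameter sets that a simulator would then render; the field names, the value ranges, and the `AlleyScenario` type are assumptions for illustration and do not reflect any actual DeepMind tool.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class AlleyScenario:
    alley_width_m: float
    wind_speed_mps: float
    wind_heading_deg: float
    laundry_lines: int


def sample_scenarios(n: int, seed: int = 0) -> list:
    """Draw n randomized alley scenarios; a fixed seed makes the set reproducible."""
    rng = random.Random(seed)
    return [
        AlleyScenario(
            alley_width_m=rng.uniform(1.5, 4.0),       # assumed narrow-alley range
            wind_speed_mps=rng.uniform(8.0, 20.0),     # assumed windstorm range
            wind_heading_deg=rng.uniform(0.0, 360.0),
            laundry_lines=rng.randint(1, 5),
        )
        for _ in range(n)
    ]


scenarios = sample_scenarios(1000)
print(len(scenarios))
print(scenarios[0])
```

Seeding the generator means a failure found in one scenario can be reproduced exactly, which matters when the scenarios are used to debug a navigation policy.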

And because the world model understands the physics of how wind interacts with fabric and how a drone's rotors create downwash, the training data is high-fidelity. You are training the AI in a synthetic world that is just as rigorous as the real one. This solves the data scarcity problem for robotics.

This is actually a point I wanted to nerd out on for a second. There is a concept called Sim-to-Real transfer. Historically, if you trained a robot in a simulation, it would often fail in the real world because the simulation was too clean. It lacked the grit, the sensor noise, and the unpredictable friction of reality. But these neural world models are inherently gritty. They learned from real-world data, so they include the imperfections, the cracks in the pavement, the dust in the air, and the way light scatters through a hazy morning in the Judean desert.

That is a great point. The messiness is actually a feature, not a bug. It makes the simulation more honest. But Herman, I have to ask about the political and security side of this. If we have these perfect digital twins of cities, and they are being used for training drones or planning infrastructure, there is a dual-use aspect here that is a bit concerning. We are talking about the ultimate surveillance and tactical tool.

You are hitting on the conservative perspective on sovereignty and security that we often discuss. If a foreign entity or even a massive corporation has a more accurate digital model of your city than your own government does, that is a massive intelligence asymmetry. We are talking about the ability to plan operations, whether they are search and rescue or something more kinetic, in a perfect virtual replica before ever setting foot on the ground. You can test every line of sight, every entry point, and every escape route in a simulation that is indistinguishable from reality.

It is the ultimate high ground. In military history, you always wanted the map. Now, the map is a living, breathing, three-dimensional simulation that can predict how a fire will spread or how a crowd will move through a specific gate in the Old City. It raises massive questions about who should have access to these high-fidelity world models. Should a private company be allowed to sell a digital twin of a sensitive government area?

And that brings us to the question of data provenance and truth. If Google or DeepMind is generating these worlds, who is responsible for their accuracy? If a city uses a world model to design a new drainage system and it fails because the AI hallucinated a pipe that wasn't there, who is liable? We are moving into a world where the simulation is the source of truth for decision-making. We are trusting the latent space to tell us what is physically possible.

It reminds me of the death of Software as a Service discussion we had in episode eight hundred sixty-four. We are starting to see people want to build their own bespoke models because they cannot trust the black box of a massive provider. I can imagine a future where a city like Jerusalem or a country like the United States insists on having its own sovereign world model, built on its own verified data, rather than relying on a commercial lab in California.

I think that is inevitable. We are going to see the rise of National Spatial Intelligence. But for the average listener, the immediate impact is going to be in augmented reality. We have been waiting for the killer app for AR glasses for years. Everyone thought it was going to be floating notifications or Minecraft on your kitchen table. But the real killer app is spatial context.

Tell me more about that. How does the world model enable the glasses? Because right now, my AR glasses still struggle to realize that my coffee cup is on the table and not floating in mid-air.

Well, currently, AR glasses have to do a lot of heavy lifting. They have to scan the room, identify the floor, the walls, and the furniture, all in real time, with limited battery life. It is a massive compute burden. But if the glasses are connected to a world model that already knows the geometry of the room or the street you are standing on, they don't have to scan everything from scratch. They just have to align your current view with the existing digital twin. It is like having a cheat sheet for reality.

So the world model provides the anchor. It is like having a perfect map of the building you are in, so the glasses just need to figure out where you are on that map. That saves battery and makes the experience much more stable.
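"Figuring out where you are on that map" is a localization problem. As a minimal sketch, assume the glasses have already matched a few landmarks they can see to the same landmarks in the digital twin, and work in two dimensions. The closed-form least-squares rigid alignment below then recovers the device's heading and position. The landmark coordinates, the pose, and the function name `align_to_twin` are made up for the example; real systems work in three dimensions and must also handle noise and wrong matches.

```python
import math


def align_to_twin(observed, mapped):
    """Return (theta, (tx, ty)) so that mapped = rotate(observed, theta) + t.

    Assumes each observed landmark is already matched to its twin landmark.
    """
    n = len(observed)
    ocx = sum(x for x, _ in observed) / n
    ocy = sum(y for _, y in observed) / n
    mcx = sum(x for x, _ in mapped) / n
    mcy = sum(y for _, y in mapped) / n

    # Accumulate the sine-like and cosine-like terms over centered point pairs.
    sin_sum = cos_sum = 0.0
    for (ox, oy), (mx, my) in zip(observed, mapped):
        ox, oy, mx, my = ox - ocx, oy - ocy, mx - mcx, my - mcy
        sin_sum += ox * my - oy * mx
        cos_sum += ox * mx + oy * my
    theta = math.atan2(sin_sum, cos_sum)

    c, s = math.cos(theta), math.sin(theta)
    tx = mcx - (c * ocx - s * ocy)
    ty = mcy - (s * ocx + c * ocy)
    return theta, (tx, ty)


# Hypothetical landmarks stored in the digital twin (map frame, metres).
twin_landmarks = [(0.0, 0.0), (4.0, 0.0), (4.0, 3.0), (1.0, 5.0)]

# Simulate what the glasses would see from a made-up true pose.
true_theta, true_t = 0.5, (3.0, -2.0)
c, s = math.cos(true_theta), math.sin(true_theta)
seen = [
    (c * (mx - true_t[0]) + s * (my - true_t[1]),
     -s * (mx - true_t[0]) + c * (my - true_t[1]))
    for mx, my in twin_landmarks
]

theta, (tx, ty) = align_to_twin(seen, twin_landmarks)
print(round(theta, 3), round(tx, 3), round(ty, 3))
```

Solving for one rotation and one translation from a handful of matched points is far cheaper than reconstructing the room from scratch, which is the battery saving being described.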

And because the world model understands the semantics of the space, it can provide context. It doesn't just see a stone wall; it knows that is a historical wall from the sixteenth century. It doesn't just see a bus stop; it knows the real-time schedule and can project the bus's actual location onto the road in front of you as a ghost image. It can show you where the underground pipes are located beneath your feet or where the nearest open parking spot is, all because it is connected to a live, spatial database.

That is where it starts to feel like magic. But it also leads back to that golden cage problem. If I am wearing glasses that are constantly feeding my view into a world model to get that context, I am essentially being a mobile sensor for whoever owns that model. I am helping them update their digital twin in real time. Every time I look at a new storefront or a pothole, I am reporting that data back to the lab.

You are the surveyor. Every person with AR glasses in two thousand twenty-six is contributing to the fidelity of the global digital twin. It is a massive crowdsourcing of reality. It is the ultimate realization of the Street View project, but instead of a car driving by once a year, it is a billion people updating the map every second.

Let's pivot to some of the practical takeaways for people who are listening and wondering how this affects their business or their career. Because this feels like one of those foundational shifts, like the move from desktop to mobile. If we are moving from search to simulation, what should people actually do?

My first piece of advice is to start thinking in three dimensions. If you are in real estate, architecture, retail, or even logistics, your data is no longer just a spreadsheet or a flat map. It is a spatial asset. You should be looking into Neural Radiance Fields, or NeRFs, and Gaussian Splatting. These are the underlying technologies that allow us to turn photos into three-dimensional scenes. If you aren't capturing your physical assets in three dimensions now, you are going to be invisible to the world models of tomorrow.

We have seen a lot of startups in this space lately. People are moving away from traditional photogrammetry, which was slow and clunky, to these neural pipelines where you can just walk through a warehouse with your phone and have a perfect three-dimensional model ten minutes later. It is becoming a standard business practice.

And the second takeaway is to watch for API access to these DeepMind labs. Right now, they are mostly showing off demos, but they are going to start opening up the World-Synth tools to developers. If you can integrate a physics-aware world model into your application, you are miles ahead of someone just using a standard game engine. You can build training simulations, retail experiences, or design tools that actually understand the laws of physics and the context of the real world.

I think there is also a massive opportunity in what I call digital curation. As these synthetic worlds become more common, we are going to need people who can verify their accuracy. It is like a new form of auditing. Is this digital twin of a factory actually representative of the physical safety hazards? Does this historical reconstruction actually match the archaeological record? That is going to be a high-demand skill for historians, engineers, and safety inspectors.

It is the bridge between the digital and the physical. And I think we should also mention the creative side. For artists and filmmakers, the barrier to entry for creating cinematic-quality worlds is collapsing. You don't need a team of a hundred environment artists to build a sci-fi city anymore. You need one person who understands how to guide a world model. You can prompt an entire world into existence and then walk through it with a virtual camera.

It is the democratization of world-building. Which is exciting, but it also means we are going to be flooded with synthetic content. How do we distinguish between a real video of a place and a perfectly generated world model? If I see a video of a protest in a city square, how do I know if it actually happened or if it was just a high-fidelity simulation run in a world model?

That is the trillion-dollar question for the next few years. We are already seeing the push for digital watermarking and data provenance. But when the simulation is this good, the only way to know if something is real might be to verify it through multiple independent sensors. We are entering an era where seeing is no longer believing. We have to look at the metadata, the sensor logs, and the cryptographic signatures.
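The idea of checking a signature on a sensor log can be sketched with Python's standard library. This example uses an HMAC, which relies on a shared secret key; that is a simplification chosen so the sketch stays dependency-free. Real provenance schemes generally use public-key signatures so that anyone can verify without being able to forge. The record fields, the key, and the function names are hypothetical.

```python
import hashlib
import hmac
import json


def sign_record(record: dict, key: bytes) -> str:
    """Return a hex HMAC-SHA256 tag over a canonical JSON encoding of the record."""
    payload = json.dumps(record, sort_keys=True).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify_record(record: dict, signature: str, key: bytes) -> bool:
    """Check the tag using a constant-time comparison."""
    return hmac.compare_digest(sign_record(record, key), signature)


device_key = b"demo-key-not-for-real-use"  # placeholder secret
log_entry = {"camera_id": "cam-01", "timestamp": "2026-01-15T16:00:00Z", "frame_hash": "abc123"}

tag = sign_record(log_entry, device_key)
print(verify_record(log_entry, tag, device_key))   # untouched record

tampered = dict(log_entry, timestamp="2026-01-16T16:00:00Z")
print(verify_record(tampered, tag, device_key))    # edited record
```

Any change to the record after signing causes verification to fail. That is the property a viewer would rely on when a video's pixels alone can no longer be trusted.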

It is a bit ironic. We live in Jerusalem, one of the most physical, ancient, and tangible places on Earth. You can feel the history in the stones. And yet, we are spending our morning talking about how this city is being turned into a cloud of probability in a data center in California. It feels like we are losing something in the translation.

It is a strange juxtaposition. But in a way, it is a form of preservation. We have seen so much history lost to time, war, and neglect. If we can create a high-fidelity, permanent digital record of these places, we are protecting them for future generations. Even if the physical stone crumbles, the digital twin remains as a testament to what was there. It is a conservative approach to technology: using the cutting edge to preserve the ancient.

I like that perspective. But we have to be careful that the simulation doesn't become a substitute for the real thing. I don't want people to think they have seen Jerusalem just because they walked through the DeepMind demo. There is a weight to being here that a world model can't capture.

There is a smell to this city, a sound, a feeling of the wind coming off the desert that a world model can't capture yet. Although, knowing these labs, they are probably working on neural scent synthesis as we speak. They want to capture the entire sensorium.

Don't give them any ideas, Herman. But you're right, there's a soul to a place that escapes the latent space. At least for now. But the progress is staggering. When you look at the leap from two thousand twenty-four to two thousand twenty-six, we have gone from blurry, flickering videos to consistent, sixty-frame-per-second, physics-aware worlds. The next two years are going to be even more transformative as these models move from labs to our everyday devices.

It makes me wonder if we will eventually have a personalized world model. Like, a digital twin of my own house that I can use to test out new furniture or plan a renovation, or even just to remember where I left my keys. If my house is a world model, the AI knows exactly where every object is because it is part of the persistent state of that world.

It is a bit creepy, but undeniably useful. I think we have covered a lot of ground here, from the technical mechanisms of temporal coherence to the ethical implications of sovereign digital twins. It is clear that the Jerusalem demo is just the beginning. We are moving into a future where the world itself is a programmable interface.

I would just encourage people to go to the DeepMind labs site and look at the demos themselves. Don't just take our word for it. When you see the way the light interacts with the surfaces in these generated worlds, and how the camera moves through the space without a single glitch or flicker, you realize that we are entering a new era of computing. It is no longer about windows and icons; it is about spaces and places.

Well said. And thank you to Daniel for sending in that prompt. It is always a pleasure to dive into what is happening in our own backyard, both physically and digitally. It helps us keep our eyes on the horizon.

Definitely. And hey, if you are enjoying these deep dives into the weird world of AI, we would really appreciate it if you could leave us a review on your podcast app or on Spotify. It genuinely helps other curious minds find the show and join the conversation.

It really does. We read all of them, and they help us decide which topics to tackle next. You can also head over to myweirdprompts dot com. We have the full archive of over a thousand episodes there, plus our RSS feed and a contact form if you want to send us a prompt like Daniel did. We love hearing what you are seeing on the frontier.

And if you are on Telegram, just search for My Weird Prompts. We have a channel there where we post every time a new episode drops, along with links to the whitepapers and demos we discuss, so you will never miss a dive into the rabbit hole.

Thanks for joining us today in Jerusalem. This has been My Weird Prompts. I am Corn Poppleberry.

And I am Herman Poppleberry. We will see you in the next one.

Or we will see you in the simulation.

Take care, everyone.