The next time you ask an LLM a question, you're not just paying for a few cents of inference. You're paying down a foundational training debt that costs more than the GDP of a small country. Today, we’re itemizing the bill for the most expensive autocomplete engine in human history.

Herman Poppleberry here. And that is an excellent way to frame it, Corn. We are talking about the pretraining phase—the absolute "Stage Zero" of artificial intelligence. As of April twenty twenty-six, the price tag for a single frontier model run has reportedly crossed the five hundred million dollar mark. That is a staggering barrier to entry.

Five hundred million. You could buy a fleet of private jets or, apparently, one very smart file of weights and biases. So, Daniel sent us this prompt today about the "Pretraining Bill." He wants us to break down the astronomical compute, the petabytes of data, and the sheer financial madness required to create a base model. And specifically, he wants us to distinguish this raw engine from the polite chatbots we actually interact with. By the way, speaking of frontier models, today’s episode is actually powered by Google Gemini three Flash.

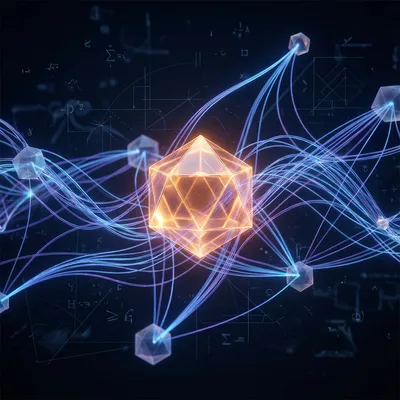

It’s poetic, isn’t it? Using the output of a massive pretraining run to discuss the cost of pretraining. But to understand why it costs half a billion dollars, we have to start with what pretraining actually is. At its core, it’s just one task: next-token prediction. You feed a model a massive, unstructured corpus of text—the entire internet, basically—and tell it, "Predict the next word." Over and over, trillions of times.
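To make "predict the next word" concrete, here is a minimal sketch that swaps the neural network for a toy bigram counter. The tiny corpus and the function names are illustrative assumptions, not anything a real lab uses, but the objective (average negative log-probability of the true next token) is the same one pretraining minimizes.

```python
import math
from collections import Counter, defaultdict

def train_bigram(tokens):
    """Count how often each token follows each other token."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts

def next_token_probs(counts, prev):
    """Turn raw follow-up counts for `prev` into a probability distribution."""
    total = sum(counts[prev].values())
    return {tok: c / total for tok, c in counts[prev].items()}

def cross_entropy(counts, tokens):
    """Average negative log-probability assigned to each actual next token."""
    losses = []
    for prev, nxt in zip(tokens, tokens[1:]):
        p = next_token_probs(counts, prev).get(nxt, 1e-12)
        losses.append(-math.log(p))
    return sum(losses) / len(losses)

# Toy corpus (an assumption for illustration; real runs use trillions of tokens)
corpus = "the cat sat on the mat the cat ate".split()
model = train_bigram(corpus)
probs = next_token_probs(model, "the")   # distribution over what follows "the"
loss = cross_entropy(model, corpus)      # lower means better next-token guesses
```

A frontier model replaces the count table with billions of learned parameters, but the training signal is still "how surprised were you by the next token?"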

It sounds so simple when you put it like that. It’s like a toddler learning to speak by eavesdropping on every conversation on Earth simultaneously. But there’s a massive difference between that "base model" and the assistant that helps me write emails, right?

A massive difference. This is the core dichotomy our listeners need to grasp: pretraining versus post-training. Pretraining creates the "Base Model." This is a raw, autocomplete-style engine. It understands the structure of language, it knows facts about the world, it can code, and it can reason to some extent. But it has no manners. It doesn't know it’s an assistant. If you ask a base model, "What is the capital of France?", it might not answer you. It might respond with, "What is the capital of Germany?" because it thinks it’s completing a list of geography quiz questions it saw during training.

Right, because in its "mind," if the previous text was a question, the most likely next text is another question, not necessarily an answer. It’s just trying to match the statistical patterns of the data it was fed. It’s essentially a mirror of the internet’s collective output.
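One practical consequence: with a base model you usually frame your request as a document whose most likely continuation is the answer. The sketch below builds such a prompt. The `Q:`/`A:` layout and the helper name are assumptions chosen for illustration, not a format any particular model requires.

```python
def frame_for_base_model(question, examples):
    """Build a Q/A-style document so the likeliest continuation is an answer."""
    lines = []
    for q, a in examples:
        lines.append(f"Q: {q}")
        lines.append(f"A: {a}")
    lines.append(f"Q: {question}")
    lines.append("A:")  # leave the answer slot open for the model to complete
    return "\n".join(lines)

prompt = frame_for_base_model(
    "What is the capital of France?",
    [("What is the capital of Germany?", "Berlin")],
)
```

The worked example pair shows the model that questions in this document are followed by answers, so the pattern it completes is "answer", not "another question".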

That’s it. Post-training is what happens next. That’s where you use techniques like Supervised Fine-Tuning, or SFT, and Reinforcement Learning from Human Feedback, known as RLHF. That’s the "finishing school" where you teach the model to be helpful, harmless, and honest. But the bill we’re looking at today? That’s almost entirely in the pretraining phase. By most public accounts, the overwhelming majority of the compute budget is spent before the model even knows how to say "Hello, I am an AI assistant."

Okay, let's look at the first line item on this bill: The Compute. When people say "thousands of GPUs," what does that actually look like in practice? Because I’m picturing a very loud, very hot room that could probably be seen from space.

You’re not far off. For a state-of-the-art model in twenty twenty-six, we’re talking about clusters of twenty-four thousand to one hundred thousand NVIDIA H100s or B200s. These aren't just plugged into a wall; they are interconnected with specialized high-speed networking like InfiniBand because the GPUs have to talk to each other constantly. If one GPU misses a beat, the whole training run can stall.

And these runs aren't just a weekend project. How long are we talking?

Usually three to six months of continuous, around-the-clock operation. Imagine running a marathon at a full sprint for half a year without stopping. The reliability engineering involved is mind-blowing. Meta’s technical report on Llama three noted that at this scale, hardware failures happen every few hours. A cable snaps, a memory chip fries, a power supply blips. They had to build automated systems just to "checkpoint" the model, meaning save its progress, so they could restart from where they left off after a crash.
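The checkpoint-and-resume idea can be sketched in a few lines. This is a toy under stated assumptions: the "training step" is a placeholder, the state is a small JSON file, and the `crash_at` parameter is a hypothetical hook for simulating a failure. Real systems save sharded model and optimizer state across many machines.

```python
import json
import os
import tempfile

def save_checkpoint(path, step, state):
    """Write atomically: dump to a temp file, then rename over the old checkpoint."""
    tmp = path + ".tmp"
    with open(tmp, "w") as f:
        json.dump({"step": step, "state": state}, f)
    os.replace(tmp, path)

def load_checkpoint(path):
    """Return (step, state), or a fresh start if no checkpoint exists yet."""
    if not os.path.exists(path):
        return 0, {"loss": None}
    with open(path) as f:
        ckpt = json.load(f)
    return ckpt["step"], ckpt["state"]

def train(path, total_steps, every, crash_at=None):
    """Toy loop; `crash_at` simulates a hardware failure at that step."""
    step, state = load_checkpoint(path)
    while step < total_steps:
        step += 1
        state["loss"] = 1.0 / step  # stand-in for a real optimizer update
        if step % every == 0:
            save_checkpoint(path, step, state)
        if crash_at is not None and step == crash_at:
            raise RuntimeError(f"simulated hardware failure at step {step}")
    return step, state

ckpt_path = os.path.join(tempfile.mkdtemp(), "ckpt.json")
try:
    train(ckpt_path, total_steps=100, every=10, crash_at=37)
except RuntimeError:
    pass  # the "cluster" went down mid-run

resumed_from, _ = load_checkpoint(ckpt_path)   # last saved step before the crash
final_step, final_state = train(ckpt_path, total_steps=100, every=10)
```

The work between the last checkpoint and the crash is lost, which is the trade-off behind choosing how often to save.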

It’s like playing a video game where you can only save every four hours, but the console explodes once a day. The stress on those engineers must be incredible. You’re sitting there watching a fifty-million-dollar electricity bill tick up, praying the cluster doesn't melt.

And the energy consumption is the silent killer on the bill. A single frontier training run can consume as much electricity as three thousand homes use in an entire year. We are seeing companies like xAI and Oracle building "gigascale" data centers now where the primary bottleneck isn't the software or even the chips—it's whether the local power grid can actually deliver enough juice to the building without causing a blackout.
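A back-of-envelope version of that energy claim can be worked out directly. Every input here is an assumption for illustration: twenty-four thousand GPUs and ninety days come from the low end of the ranges mentioned in this episode, 0.7 kW is roughly the rated power of an H100-class accelerator, the 1.5x overhead for cooling and networking is a guess, and 10,500 kWh per year is an approximate figure for a US household.

```python
def training_energy_kwh(num_gpus, gpu_kw, overhead, days):
    """Total facility energy for a run, in kilowatt-hours."""
    hours = days * 24
    return num_gpus * gpu_kw * overhead * hours

def homes_equivalent(energy_kwh, home_kwh_per_year):
    """How many households' annual electricity use this energy corresponds to."""
    return energy_kwh / home_kwh_per_year

# All inputs below are assumptions, not measured values
energy = training_energy_kwh(num_gpus=24_000, gpu_kw=0.7, overhead=1.5, days=90)
homes = homes_equivalent(energy, home_kwh_per_year=10_500)
```

With these particular inputs the result comes out in the thousands of homes, the same order of magnitude as the figure quoted above. Different assumptions would move the number considerably.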

Which explains why all these AI companies are suddenly getting into nuclear power and buying up land next to hydroelectric dams. They aren't just tech companies anymore; they’re industrial power consumers. But okay, you’ve got the chips, you’ve got the power. Now you need the "fuel." Let’s talk about the Data Bill. We’re not just talking about a few Wikipedia articles here.

No, the scale has shifted dramatically. A few years ago, GPT-three was trained on about three hundred billion tokens. Today, Llama three point one and other frontier models are being trained on fifteen trillion tokens or more. To give you a sense of scale, a "token" is roughly three-quarters of a word. Fifteen trillion tokens is a large share of the usable text that humans have digitized and put on the public web.
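The arithmetic behind those figures is simple enough to check. This sketch uses only the round numbers quoted here and the three-quarters-of-a-word rule of thumb. The constant and function names are made up for illustration.

```python
GPT3_TOKENS = 300_000_000_000          # about three hundred billion
FRONTIER_TOKENS = 15_000_000_000_000   # about fifteen trillion

def tokens_to_words(tokens):
    """Apply the rough rule that one token is about 3/4 of a word."""
    return tokens * 3 // 4

scale_up = FRONTIER_TOKENS // GPT3_TOKENS        # how many times more data
approx_words = tokens_to_words(FRONTIER_TOKENS)  # rough word count
```

That is a fifty-fold increase in training data, or on the order of eleven trillion words.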

Is it just a giant vacuum cleaner? Like, "if it’s on the internet, it’s in the model"?

That was the early approach—just grab everything from Common Crawl, which is this massive, raw scrape of the web. But "raw" internet is mostly garbage. It’s spam, it’s gibberish, it’s toxic comments, it’s duplicate SEO bait. The real "Data Bill" isn't just the storage; it’s the cleaning. This is a massive engineering effort. You have to run deduplication to make sure the model doesn't see the same Reddit thread ten thousand times. You have to filter for quality.
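As a rough illustration of the deduplication step, the sketch below drops exact duplicates after light normalization. It is deliberately simplistic: production pipelines typically add fuzzy matching such as MinHash to catch near-duplicates, and the sample documents are invented.

```python
import hashlib
import re

def normalize(text):
    """Lowercase and collapse whitespace so trivial variants hash the same."""
    return re.sub(r"\s+", " ", text.lower()).strip()

def deduplicate(docs):
    """Keep the first occurrence of each normalized document."""
    seen = set()
    unique = []
    for doc in docs:
        digest = hashlib.sha256(normalize(doc).encode("utf-8")).hexdigest()
        if digest not in seen:
            seen.add(digest)
            unique.append(doc)
    return unique

# Invented sample documents for illustration
docs = [
    "Best steak recipe ever!",
    "best  steak recipe EVER!",   # same page with different casing and spacing
    "How transformers work",
]
unique_docs = deduplicate(docs)
```

Storing only hashes rather than full documents is what lets this kind of check scale to billions of pages.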

I remember reading about FineWeb-Edu from Hugging Face. That was a big deal, right?

Huge. They used AI classifiers to look at the raw web and say, "Is this page educational? Does it have high signal-to-noise ratio?" By throwing away ninety percent of the junk and keeping only the high-quality stuff, they found they could train models that were much "smarter" with less data. But developing those filters is itself a massive compute task. You’re essentially using a small AI to decide what the big AI gets to eat.
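The "small AI decides what the big AI eats" idea boils down to score-then-threshold. In this sketch a hand-written heuristic stands in for the learned classifier. The scoring rule, the threshold, and the sample pages are all hypothetical and far cruder than what a project like FineWeb-Edu actually uses.

```python
def quality_score(text):
    """Hypothetical heuristic stand-in for a learned classifier; returns 0.0 to 1.0."""
    words = text.split()
    if not words:
        return 0.0
    unique_ratio = len(set(w.lower() for w in words)) / len(words)  # penalize repetition
    alpha_ratio = sum(w.isalpha() for w in words) / len(words)      # penalize symbol soup
    length_bonus = min(len(words) / 20, 1.0)                        # penalize tiny snippets
    return unique_ratio * alpha_ratio * length_bonus

def filter_corpus(docs, threshold=0.3):
    """Keep only documents whose score clears the (assumed) threshold."""
    return [d for d in docs if quality_score(d) >= threshold]

pages = [
    "buy now buy now buy now buy now buy now",
    "Photosynthesis converts light energy into chemical energy stored in glucose molecules inside plant cells",
    "$$$ 1234 !!! ####",
]
kept = filter_corpus(pages)
```

Swapping `quality_score` for a model-based scorer is where the extra compute cost mentioned above comes from, since every candidate page has to be run through it.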

It’s like a digital kitchen. You have to wash the vegetables, peel them, and throw away the rot before you can even start cooking the soup. And if you mess up that "data mix"—if you put in too much Python code and not enough French poetry—the model’s "personality" is baked in forever. You can’t easily "un-learn" a bad data diet during post-training.

And that brings us to the actual dollar amount. In twenty twenty-three, people estimated GPT-four cost maybe one hundred million dollars to pretrain. Today, the stakes have quintupled. If you factor in the cost of the GPU cluster—which can cost billions to build—the electricity, the specialized data sets, and the salaries of the PhDs who know how to keep a hundred thousand GPUs from catching fire, you are looking at a total R&D bill that exceeds a billion dollars for a single model family.
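For a sense of where the raw compute part of that bill comes from, here is a rough estimate using the common rule of thumb of about six floating-point operations per parameter per training token. The model size and token count mirror Llama three point one 405B as discussed here. The per-GPU peak throughput, the forty percent utilization, and the two-dollar hourly rate are assumptions picked for illustration.

```python
def training_flops(params, tokens):
    """Common rule of thumb: about 6 FLOPs per parameter per training token."""
    return 6 * params * tokens

def gpu_hours(flops, peak_flops_per_gpu, utilization):
    """GPU-hours needed given peak throughput and an assumed utilization."""
    effective = peak_flops_per_gpu * utilization
    return flops / effective / 3600

flops = training_flops(params=405e9, tokens=15e12)

# Assumed: ~989 TFLOP/s peak per GPU, 40% of peak actually achieved
hours = gpu_hours(flops, peak_flops_per_gpu=989e12, utilization=0.40)

# Assumed rental price of 2 dollars per GPU-hour
rental_cost = hours * 2.00
```

This covers one clean final run on rented hardware, so it lands well below the headline totals. The gap is the cluster build-out, failed and exploratory runs, data work, and salaries that the full bill includes.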

That is the definition of a moat. I mean, I have some decent savings, Herman, but I’m a few hundred million short of starting "Corn-AI." This effectively means that only, what, five or six organizations on the planet can play this game?

It’s a very short list. Microsoft and OpenAI, Google, Meta, Anthropic, and maybe xAI. Then you have the Chinese state-backed firms. That’s it. If you’re a startup today, you are almost certainly not pretraining a frontier-level model from scratch. You are either fine-tuning someone else’s model or you’re building on top of an open-weight base model like Llama. The "Compute Divide" is real. It’s becoming a game of whoever has the most capital and the best relationship with the power company.

It’s fascinating because it changes the whole "garage startup" myth of Silicon Valley. You can’t build a frontier LLM in a garage because the garage doesn't have a dedicated substation and a hundred-million-dollar credit line with NVIDIA. But let's look at what actually comes out the other side of this months-long, multi-hundred-million-dollar process. You have a "Base Model." If I were to interact with a raw base model right now, how would it feel compared to the ChatGPT I’m used to?

It feels like a brilliant, chaotic ghost. If you give it a prompt like, "The best way to cook a steak is..." it will do an amazing job. It will give you a list of instructions that sound like a professional chef. But if you ask it, "Can you help me cook a steak?", it might respond with, "Can you help me bake a cake? Can you help me roast a chicken?" It thinks you’re writing a children’s book about helpful animals.

So it’s a pure mimic. It doesn't have a sense of "self" or a "mission" to be an assistant. It’s just completing the pattern.

Right. And here’s the kicker: some researchers argue that the base model is actually more capable than the chatbot version. When we do post-training—the RLHF and the safety tuning—we are essentially "lobotomizing" parts of the model. We’re telling it, "Never say this, always respond in this specific tone, don't be creative in these ways." It makes the model safe for a corporate environment, but it often reduces its raw problem-solving edge or its "out of the box" thinking.

That’s wild. So we spend five hundred million dollars to build a super-intelligence, and then we spend another few million to make it act like a polite, slightly boring intern.

It’s a necessary trade-off for usability. But for developers, understanding that base model is crucial. If you're building a specialized tool, sometimes you want to start with the base model and do your own fine-tuning because you don't want the "preachy" behavior that comes with standard RLHF.

Okay, let's dive into that post-training stack for a second, just so we can really see the contrast. Daniel mentioned SFT, RLHF, and DPO. These are the tools that take our "chaotic ghost" and give it a name tag and a job description. How do they work, and why are they so much cheaper than the pretraining?

They’re cheaper because they work on much smaller datasets. While pretraining uses trillions of tokens of "dirty" web data, SFT—Supervised Fine-Tuning—uses maybe ten thousand to one hundred thousand "gold" examples. These are high-quality conversations written by humans. "User asks X, Assistant responds with Y." This teaches the model the format of being a chatbot. It’s like teaching a genius who has read every book in the world how to actually answer a phone call.
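A single SFT example might be prepared roughly like this. The `<|user|>`, `<|assistant|>`, and `<|end|>` markers are hypothetical (each real model defines its own chat template), whitespace splitting stands in for a real tokenizer, and masking the prompt out of the loss is a common choice, not a universal rule.

```python
def build_sft_example(user_text, assistant_text):
    """Format one chat pair and mark which tokens contribute to the loss.

    The special markers are hypothetical placeholders for a real chat template.
    """
    prompt = ["<|user|>"] + user_text.split() + ["<|assistant|>"]
    response = assistant_text.split() + ["<|end|>"]
    tokens = prompt + response
    # 0 = ignore (prompt tokens), 1 = train on this token (assistant response)
    loss_mask = [0] * len(prompt) + [1] * len(response)
    return tokens, loss_mask

tokens, loss_mask = build_sft_example(
    "What is the capital of France?",
    "The capital of France is Paris.",
)
```

The objective is still next-token prediction. What changes is the data (curated conversations) and the fact that only the assistant's side is graded.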

And then RLHF is the "ranking" phase?

Reinforcement Learning from Human Feedback. You show human raters two different answers to the same question and have them say, "A is better than B." Those rankings train a separate "reward model" that learns to score answers the way the raters would, and then the chatbot is tuned with reinforcement learning to produce answers that score highly. It’s how we train it to not be rude, to be concise, and to follow safety guidelines.
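One common way to turn those A-versus-B judgments into a training signal is a pairwise (Bradley-Terry style) loss on the scores a reward model assigns. The sketch below shows only the loss for a single comparison. The scalar rewards are placeholders for what a real reward model would output.

```python
import math

def preference_loss(reward_chosen, reward_rejected):
    """Pairwise loss: -log(sigmoid(r_chosen - r_rejected)).

    Small when the preferred answer already scores higher, large otherwise.
    """
    margin = reward_chosen - reward_rejected
    return math.log(1.0 + math.exp(-margin))

# Placeholder scores for illustration
agrees_with_human = preference_loss(reward_chosen=2.0, reward_rejected=0.0)
disagrees_with_human = preference_loss(reward_chosen=0.0, reward_rejected=2.0)
undecided = preference_loss(reward_chosen=1.0, reward_rejected=1.0)
```

Minimizing this over many human comparisons pushes the scorer to rank answers the way people do. A reinforcement learning step then optimizes the chatbot against that scorer.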

And you mentioned DPO—Direct Preference Optimization. Is that the new hotness?

It is. It was introduced in twenty twenty-three and caught on quickly because it skips the complicated step of building a separate "reward model." It just uses math to directly optimize the model based on those human preferences. It’s simpler, it’s often more stable, and it’s part of why we’re seeing such a rapid explosion of high-quality "Instruct" models. A small team can take a base model and do DPO for ten thousand dollars and a few days of compute. That’s why you see so many variants of Llama on Hugging Face. The "polishing" is cheap. The "quarrying of the stone" is what costs half a billion.
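The "just uses math" part can be written down compactly. This is a sketch of the DPO loss for one preference pair, taking as inputs the log-probabilities that the model being trained and a frozen reference copy assign to the preferred and rejected answers. The numeric inputs and the `beta` value are illustrative assumptions.

```python
import math

def dpo_loss(policy_chosen_logp, policy_rejected_logp,
             ref_chosen_logp, ref_rejected_logp, beta=0.1):
    """DPO loss for one pair: -log(sigmoid(beta * (chosen_shift - rejected_shift))).

    Each shift measures how far the policy has moved from the frozen reference
    model on that answer, in log-probability terms.
    """
    chosen_shift = policy_chosen_logp - ref_chosen_logp
    rejected_shift = policy_rejected_logp - ref_rejected_logp
    logits = beta * (chosen_shift - rejected_shift)
    return math.log(1.0 + math.exp(-logits))

# Illustrative log-probabilities, not real model outputs
start = dpo_loss(-12.0, -11.0, -12.0, -11.0)     # policy identical to reference
improved = dpo_loss(-10.0, -13.0, -12.0, -11.0)  # policy now favors the chosen answer
```

No separate reward model appears anywhere: the preference data updates the language model directly, which is a large part of why the method is cheap to run.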

I love that metaphor. Pretraining is quarrying a massive block of marble from the mountain. Post-training is the sculptor coming in with a chisel to make it look like a person. You can't sculpt if you don't have the marble, and only a few companies own the mountain.

And the mountain is getting harder to mine. We should talk about the "Data Wall." This is a big topic in twenty twenty-six. We have basically used up the high-quality human text on the internet. We’ve scraped the books, the code, the papers. So now, companies are turning to "Synthetic Data"—using AI models to generate data to train the next generation of AI models.

That sounds like a recipe for digital inbreeding. If a model learns from its own mistakes, doesn't it just get weirder and weirder until it collapses?

That’s the fear—"Model Collapse." If the synthetic data isn't carefully curated, the model loses the nuance of human language. It starts repeating its own quirks. But, interestingly, if you use a stronger model to generate data for a smaller model—like using GPT-four to train a small coding model—it actually works incredibly well. It’s like a teacher writing a textbook for a student. The "Data Bill" is shifting from "pay for scrapes" to "pay for high-quality synthetic generation."

It’s an interesting pivot. So the moat isn't just about having the most data anymore; it’s about having the best "teacher" models to create the next generation of data. It’s a self-reinforcing cycle. If you have the best model now, you can use it to build the best training set for your next model, widening the gap between you and the competition.

And that’s why the engineering talent is such a huge part of the cost. You need people who understand the "alchemy" of the data mix. There was a recent case study on a one point eight trillion parameter model where the researchers found that adding just a tiny percentage of high-quality textbook data late in the pretraining run had a massive impact on the model’s reasoning abilities. It’s not just about quantity; it’s about the timing and the blend.

It really does feel like digital alchemy. You’re mixing these massive vats of data and compute, hoping the "philosopher's stone" comes out at the end. But what happens if the run fails? If you spend three months and fifty million dollars in electricity and the model comes out... stupid?

It happens. More often than these companies like to admit. Sometimes a model degenerates and just starts repeating the word "the" over and over. Sometimes the loss curve, the mathematical measure of how well it’s learning, just flatlines for no obvious reason. That’s why these labs run "miniature" versions of the training first. They’ll train a series of much smaller models, up to maybe seven billion parameters, on a slice of the data, then extrapolate to predict how the one-trillion parameter model will behave. The relationships they fit are called "scaling laws."
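The extrapolation step can be sketched as a straight-line fit in log-log space. The data points below are synthetic, generated from an assumed power law purely so the fit has something to recover. Published scaling-law fits use real training runs and usually richer functional forms, for example with an irreducible-loss term.

```python
import math

def fit_power_law(sizes, losses):
    """Least-squares fit of log(loss) = log(a) - b * log(size)."""
    xs = [math.log(n) for n in sizes]
    ys = [math.log(l) for l in losses]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
             / sum((x - mean_x) ** 2 for x in xs))
    a = math.exp(mean_y - slope * mean_x)
    return a, -slope

def predict_loss(a, b, size):
    """Extrapolate the fitted curve loss = a * size^(-b) to a new model size."""
    return a * size ** (-b)

# Synthetic illustration only: points generated from loss = 20 * N^-0.1
small_sizes = [1e7, 1e8, 1e9, 7e9]
small_losses = [20 * n ** -0.1 for n in small_sizes]

a, b = fit_power_law(small_sizes, small_losses)
predicted = predict_loss(a, b, 1e12)  # forecast for a one-trillion-parameter model
```

If the real large run comes in far from the forecast, that is an early warning that something in the data or the setup is off.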

So they’re basically doing wind-tunnel testing before they build the actual jet.

Precisely. If your "scaling law" prediction is off, you might waste a hundred million dollars. This is why guys like Sam Altman and Dario Amodei are so obsessed with compute. They’re betting that the scaling laws will keep holding—that if they just keep paying a bigger "bill," the models will keep getting smarter.

But at some point, you hit a physical limit, right? Not just the money, but the literal atoms. We’re already talking about data centers that need their own dedicated power plants.

We are. We’re seeing a shift toward more efficient architectures. You mentioned state-space models and Mamba in your notes, Corn. There’s also a lot of research into "sparse" models, like Mixture of Experts, where only a fraction of the model "fires" for any given prompt. That’s reportedly how GPT-four, along with open-weight models like Mixtral and DeepSeek-V3, manages to be so large but still relatively fast. Llama three point one 405B, by contrast, is a dense model that uses every parameter on every token. The sparse ones aren’t using all their "brain" at once.

It’s like a giant library where only the librarians relevant to your specific question get up from their desks. It saves a ton of energy, but the "bill" to build the library in the first place is still there. You still have to pretrain all those "experts."

Right. The "bill" for the initial training doesn't go away. In fact, for Mixture of Experts, the pretraining can be even trickier because you have to balance the experts so that one doesn't become "too smart" while the others stay "lazy." It’s a massive load-balancing problem.
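Here is a minimal sketch of top-k expert routing and of how you might measure whether the load is balanced. The router scores are invented numbers. In a real model they come from a learned layer, and training typically adds an auxiliary loss to discourage the kind of imbalance this measurement would reveal.

```python
import math
from collections import Counter

def softmax(scores):
    """Convert raw router scores into probabilities."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_scores, k=2):
    """Pick the k highest-scoring experts and renormalize their weights."""
    probs = softmax(router_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]

def expert_load(batch_scores, k=2):
    """Fraction of routed slots each expert receives across a batch of tokens."""
    counts = Counter()
    for scores in batch_scores:
        for expert, _ in route_top_k(scores, k):
            counts[expert] += 1
    total = sum(counts.values())
    n_experts = len(batch_scores[0])
    return [counts[e] / total for e in range(n_experts)]

# Invented router scores: three tokens, four experts
batch = [
    [2.0, 1.0, 0.1, 0.0],
    [1.5, 2.5, 0.2, 0.1],
    [3.0, 0.5, 0.4, 0.3],
]
routing = route_top_k(batch[0])  # (expert index, weight) pairs for the first token
load = expert_load(batch)        # share of traffic per expert
```

In this toy batch two experts take all the traffic while the other two sit idle, which is exactly the "lazy expert" failure the balancing machinery exists to prevent.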

So, looking at this from a business or developer perspective—what are the actual takeaways? If I’m a CTO sitting on a pile of cash, do I try to build my own mountain, or do I just rent a room on someone else’s?

For ninety-nine point nine percent of businesses, you rent. The takeaway is: don't confuse a base model with a product. If you're using an API, you need to know if you're hitting the "Base" endpoint or the "Instruct" endpoint. If you want a chatbot, use Instruct. If you want to build a very specialized tool—say, something that translates legal jargon into plain English—you might actually get better results by taking a base model and doing your own SFT on a few thousand high-quality legal documents.

And for the average person listening, the takeaway is probably just a bit of healthy respect for the sheer industrial scale of what’s happening behind that little blinking cursor. When you ask it to write a poem about your cat, you are utilizing an asset that cost more than a skyscraper.

It’s the centralization of intelligence. We used to think the internet was this decentralized, democratic thing. But the "engines" of the internet—the frontier LLMs—are becoming some of the most centralized assets in history. Only a few entities can afford the "bill." That has huge implications for who gets to set the safety rules, who gets to define "truth" for these models, and who gets access to the most powerful tools.

It’s a high-stakes game. And I wonder if the "bill" will ever actually go down. We’ve seen this in other technologies—the first computers cost millions and filled rooms, now we have them in our pockets. Will we ever see "frontier" pretraining become affordable for a mid-sized company?

It’s a tug-of-war. On one side, we have better algorithms and more efficient chips. On the other side, the "frontier" keeps moving. As soon as it becomes cheap to train a GPT-four level model, the big labs move on to GPT-five or six, which costs ten times more. We might be in a permanent state where the "latest and greatest" is always priced at the limit of what a multi-billion dollar corporation can afford.

So the moat isn't a fixed wall; it's a moving target. You have to keep running just to stay in the same place relative to the frontier.

That’s the reality of the AI arms race in twenty twenty-six. And it’s why open-source—or "open-weight"—models are so important. When Meta spends five hundred million dollars to train Llama and then releases the weights, they are essentially giving a five-hundred-million-dollar gift to the developer community. They’re subsidizing the "Data Bill" and the "Compute Bill" for everyone else.

Although, let's be honest, they’re doing it because it hurts their competitors and builds an ecosystem around their hardware standards. It’s "generous" with a side of "calculated strategy."

Oh, absolutely. There are no charities in the frontier AI space. But regardless of the motivation, the result is that we get to play with these "base models" without having to pay the "bill" ourselves.

Well, I for one am glad I'm not the one trying to explain a fifty-million-dollar electricity bill to a board of directors. I’ll stick to the cheeky observations and let the PhDs worry about the GPU failure rates.

And I’ll stay here reading the technical reports on how they fixed those failure rates. It’s a win-win.

Before we wrap this up, there are a few practical things for people to keep in mind. If you're a dev, go play with the base models. OpenAI and others often have "base" versions available through their APIs. Try a prompt on a base model versus the chat version. It’s a great way to see the "raw intelligence" before the filters are applied. You’ll see why people call them "stochastic parrots," but you’ll also see the moments of genuine, weird brilliance that often get polished away in the assistant versions.

And for the business leaders—invest in your data. The "bill" for pretraining is high because data is the fuel. Even if you're just fine-tuning a model, the quality of your internal data will be the differentiator. A mediocre model with perfect data will often outperform a frontier model with messy, irrelevant data for a specific task.

Good point. The "Data Kitchen" is still the most important room in the house. We actually talked about that back in episode eighteen thirty-nine, "AI's Data Kitchen," if you want to hear more about the "hoovering" side of things.

I think we’ve itemized the bill pretty thoroughly. Compute, data, power, and the "ghost in the machine" base model that comes out the other end. It’s a wild era to be watching this stuff.

It really is. And that's our look at the staggering cost of birth for an LLM. Thanks as always to our producer Hilbert Flumingtop for keeping the lights on—at a much lower cost than a GPU cluster, fortunately.

And a big thanks to Modal for providing the GPU credits that power this show. They make the "compute bill" a lot easier for us to handle.

This has been My Weird Prompts. If you're enjoying these deep dives into the guts of AI, we’d love it if you left us a review on Apple Podcasts or Spotify. It’s the best way to help other curious humans—and maybe a few curious bots—find the show.

Find us at myweirdprompts dot com for the full archive and all the ways to subscribe.

Catch you in the next one.

See ya.