#2202: April Twenty-First: Israel's Ceasefire Collapse Moment

As Iran's ceasefire with Israel expires on Yom Hazikaron, the IDF signals maximum readiness through deliberate leaks while Netanyahu hints at "othe...

#2201: The UK's Impossible Choice in Trump's Iran War

Britain is caught between US military demands and European diplomatic norms—and the fracture could reshape the transatlantic alliance for a generat...

#2200: Reading the Geopolitical Forecast in Oil Prices

When markets spike on breaking news, which price signals actually tell you what traders believe will happen next—and which ones are already priced in?

#2199: Mining the Strait: Why Clearing Iran's Weapons Takes Months

The US is conducting one of the most technically complex military operations in decades—clearing Iranian mines from the world's most critical oil c...

#2198: The Strait Choke: How Naval Blockades Actually Work

The US just announced a blockade of Iranian ports. We break down the legal definition, four centuries of blockade history, and why this one might—o...

#2197: Who Controls the Press Pool?

How the traveling press pool evolved from FDR's train to Air Force One—and what happens when governments decide who gets to cover them.

#2196: The Annotation Economy: Who Labels AI's Training Data

Annotation is the invisible foundation of AI—and a $17B industry by 2030. Here's what dataset curators actually need to know about the tools, platf...

#2195: Nash's Real Genius (And Why the Movie Got It Wrong)

The bar scene in A Beautiful Mind is mathematically wrong—and it obscures Nash's actual breakthrough. We trace the real ideas from his 1950 papers ...

#2194: Game Theory for Multi-Agent AI: Design Better, Fail Less

Nash equilibrium, mechanism design, and why your AI agents are playing prisoner's dilemma whether you know it or not.

#2193: Running Claude in Your Apartment (The Physics Says No)

Building a local AI inference server to rival Claude Code sounds great until you do the math on heat, noise, and neighbor relations.

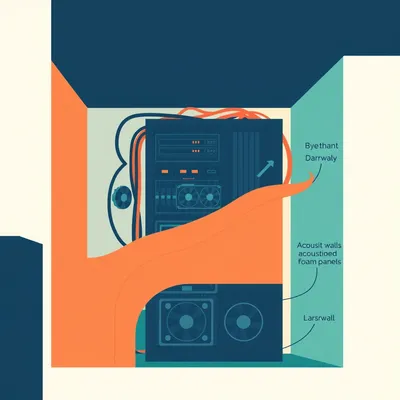

#2192: How We Built a Podcast Pipeline

Hilbert reveals the complete technical architecture behind 2,000+ episodes—from voice memos to GPU-powered TTS, with Claude models, LangGraph workf...

#2191: Making Multi-Agent AI Actually Work

Research from Google DeepMind, Stanford, and Anthropic reveals most multi-agent systems waste tokens and amplify errors. Single agents with better ...

#2190: Simulating Extreme Decisions With LLMs

LLMs fail at the exact problem wargaming was built to solve—simulating irrational, extreme decision-makers. A new study reveals why.

#2189: Scaling Multi-Agent Systems: The 45% Threshold

A landmark Google DeepMind study reveals that adding more AI agents often degrades performance, wastes tokens, and amplifies errors—unless your sin...

#2188: Is Emergence Real or Just Bad Metrics?

The debate over whether AI models exhibit genuine emergent abilities or just appear to because of how we measure them—and why it matters for safety...

#2187: Why Claude Writes Like a Person (and Gemini Doesn't)

Claude produces prose that sounds human. Gemini reads like Wikipedia. The difference isn't capability—it's how they were trained to think about wri...

#2186: The AI Persona Fidelity Challenge

Advanced LLMs dominate benchmarks but fail at staying in character—especially when asked to play morally complex or antagonistic roles. What does t...

#2185: Taking AI Agents From Demo to Production

Sixty-two percent of companies are experimenting with AI agents, but only 23% are scaling them—and 40% of projects will be canceled by 2027. The ga...

#2184: The Economics of Running AI Agents

Production AI agents can cost $500K/month before optimization. Learn model routing, prompt caching, and token budgeting to cut costs 40-85% without...

#2183: Making Voice Agents Feel Natural

Turn-taking, interruptions, and latency are destroying voice AI UX—and the fixes are deeply technical. Here's what's actually happening underneath.