AI

Artificial intelligence, machine learning, and everything LLM

#2178: How to Actually Evaluate AI Agents

Frontier models score 80% on one agent benchmark and 45% on another. The difference isn't the model—it's contamination, scaffolding, and how the te...

#2177: Skip Fine-Tuning: Shape LLMs With Alignment Alone

Can you build a personalized LLM by skipping traditional fine-tuning and using only post-training alignment methods like DPO and GRPO? We break dow...

#2175: Let Your AI Argue With Itself

What happens when you let multiple AI personas debate each other instead of asking one model one question? A deep dive into synthetic perspective e...

#2174: Role-Playing as Orchestration

How a role-playing protocol from NeurIPS 2023 became one of AI's most underrated agent frameworks—and what happens when you scale it to a million a...

#2173: Inside MiroFish's Agent Simulation Architecture

MiroFish generates thousands of AI agents with distinct personalities to predict social dynamics. But research reveals a critical flaw: LLM agents ...

#2172: Council of Models: How Karpathy Built AI Peer Review

Andrej Karpathy's llm-council uses anonymized peer review to make language models evaluate each other fairly—but can it really suppress model bias?

#2171: How IQT Labs Built a Wargaming LLM (Then Archived It)

A deep code review of Snowglobe, IQT Labs' open-source LLM wargaming system that ran real national security simulations before being archived. What...

#2170: Pricing Agentic AI When Nothing's Predictable

How do you charge fixed prices for systems that operate in fundamental uncertainty? Consultants are discovering frameworks that work—but they requi...

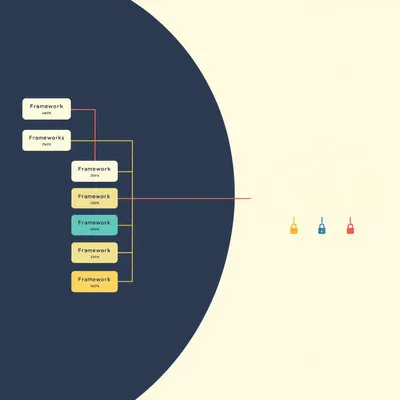

#2169: How Enterprises Are Rethinking Agent Frameworks

Twelve major agentic AI frameworks exist—yet many serious developers avoid them entirely. What patterns emerge in real enterprise adoption?

#2168: What Serious Agentic AI Developers Actually Need to Know

Python, TypeScript, LangGraph, and the frameworks reshaping how agents work. A technical map of the skills and concepts that separate prototypes fr...

#2167: Sync vs. Async: Architecting Agents for Scale

Why most enterprise AI agents fail in production has less to do with models and more to do with whether they're built synchronously or asynchronously.

#2166: Code vs. Canvas: How Developers Pick Their Tools

LangGraph or Flowise? The honest answer isn't obvious. Developers gain speed and integrations with visual builders—but lose version control, testin...

#2165: Strip Your Agent to Bash

The frameworks matter less than you think. What separates a working agent from a failing one is the harness—the orchestration, memory, and tool des...

#2164: Why Bigger Context Windows Don't Fix Attention

Frontier models have million-token context windows, but attention degrades well before you hit the limit. New research reveals why bigger isn't bet...

#2163: Designing Autonomy Boundaries for AI Agents

Production data reveals a surprising truth: fully autonomous AI agents waste 98% of their context window on tool descriptions. Here's why the indus...

#2162: When Knowledge Work Stops Being Safe

The knowledge economy promised safety from automation. Then AI arrived. Here's how we got here—and why the disruption this time is different.

#2160: Claude's Latency Profile and SLA Guarantees

Claude is measurably slower than competitors—and Anthropic's SLA promises are even thinner than the latency numbers suggest. What enterprises actua...

#2158: Claude Managed Agents: Brain Versus Hands

Anthropic's new Managed Agents service runs your agent loop on their infrastructure. Here's what you gain, what you lose, and who it's actually for.

#2155: Public Affairs vs. Lobbying: Shaping the Battlefield

Lobbying is just one tool. Public affairs shapes the entire regulatory battlefield—from AI laws to supply chains.

#2153: How Lobbying Actually Works in DC

Federal lobbying hit $6B in 2025. Here’s what a lobbyist actually does all day—and why the system regulates itself.