#audio-processing

34 episodes

#2754: Why Your Dictation Setup Might Be Wrong

Modern ASR is shockingly robust. The biggest predictor of accuracy? How well your audio matches its training data.

#2726: Radio Listening vs Podcast Guilt

Why does podcast listening feel different from radio? A deep dive into attention, multitasking, and the psychology of audio.

#2643: How Stenographers Type 300 Words Per Minute

Court reporters don’t type letters—they chord syllables at 300 words per minute. Here’s how it works and why AI can’t replace them yet.

#2618: Fixing Acronyms in TTS Pipelines

How to handle acronyms in text-to-speech pipelines using BERT models, lexicons, and layered preprocessing.

#2591: Can You Swap Our Podcast Voices?

How dynamic voice replacement could let listeners choose who narrates each host's lines.

#2590: How Disfluency Detection Models Clean Up Speech

How transformer models distinguish "um" from meaningful speech — and why removing too much makes you sound like a robot.

#2582: What Your Browser Does to Mic Audio Before It Reaches Your Server

getUserMedia returns audio, but not raw audio. Here's what browsers actually do to your mic feed before it hits your server.

#2563: How Audio Fingerprinting Actually Works

Spectrogram peaks, constellation maps, and hash matching — the elegant mechanics behind identifying any song in seconds.

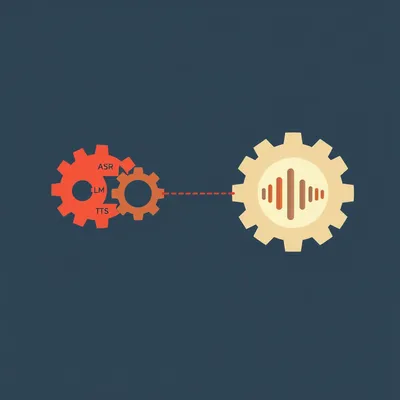

#2512: How Speech-to-Speech Models Eliminate the Robot Voice

Why AI voice agents sound robotic, and how natively integrated speech-to-speech models fix it.

#2498: Build Your First Python Program in 7 Lines

We coach a complete beginner through building a working Python game using only voice—no screenshare, no diagrams.

#2486: Why Noise Reduction Can Ruin Transcription Accuracy

Cleaning audio before transcription can increase errors by up to 46%. Here's the right approach for your voice app.

#2443: How Podcast RSS Feeds Can Speak Every Language

One RSS feed, a transcript tag, and TTS voice cloning — the emerging standard for letting any podcast speak any language.

#2337: How Speaker Diarization Powers Everything From Call Centers to Courts

Discover how pyannote and other tools tackle the critical task of identifying "who spoke when" in audio, and why it’s harder than it sounds.

#2288: The Invisible Gatekeeper of Voice Tech

How voice activity detection shapes every step of the voice tech pipeline, and why it’s harder than it seems.

#2272: The AI Transcription Sweet Spot

Does higher-quality audio make AI transcription worse? New research reveals a surprising "sweet spot" for bitrate, challenging a core assumption of...

#2095: Bluetooth Finally Beats Wi-Fi for Whole-House Audio

Wi-Fi audio sync is a mess. A new Bluetooth standard called Auracast fixes it with simple, seamless broadcasting.

#2056: How Music Models Turn Sound Into Language

A look at how AI music models use audio tokens, transformers, and diffusion to turn text into songs.

#1917: Herman's Music Hour Vol. 2: Seder Remixes for Passover 5786

Herman presents AI-generated covers of classic Passover Seder songs, produced in Suno — the second installment of Herman's Music Hour.

#1904: JPEG XL vs AVIF: The Future of Your Photos

Why are blocky sky artifacts still haunting your photos in 2026? We break down the math behind JPEG, WebP, AVIF, and the new JPEG XL.

#1854: The Conductor Is a Human Metronome

A conductor isn't just a timekeeper; they're a CPU for the orchestra, using high-bandwidth non-verbal signals to unify 80 musicians.

#1851: AI Toasters and Poetic Gym Coaches: Why We’re Drowning in Useless AI

From smart toasters that need Wi-Fi to email rewriters that sound like corporate robots, here are the most baffling AI features we’ve seen.

#1800: The Engineering of Urgent Sound

Why some sounds make your skin crawl: the science of emergency alerts.

#1778: Audio Is the New "Read Later" Graveyard

Why listening to AI conversations beats reading dense PDFs, and how serverless GPUs make it cheap.

#1568: Is Your AI Listening or Just Lip-Reading?

Is Gemini a brilliant audio engineer or just a talented lip-reader? Explore the "signal vs. symbol" gap in AI audio processing.

#1079: The Analog Hole: Solving Vocal Privacy in Shared Spaces

How do you keep your voice private when walls are thin? Explore the high-tech muzzles and throat mics designed for the remote work era.

#911: Sound as a Shield: Reclaiming Calm in High-Stress Zones

Learn how to use soundscapes, brown noise, and AI to protect your nervous system and reclaim calm during times of high stress and sensory overload.

#732: Mastering Your Sound: AI EQ and the Perfect Vocal Chain

Use AI to find your perfect EQ profile and build a pro vocal chain. Fix nasality, master de-essing, and sound your best on any device.

#731: Mastering Multi-Room Audio: Avoiding the EQ Lasagna

Stop layering filters on top of filters. Learn the technically correct way to sync your home audio without creating a muddy "EQ lasagna."

#660: The Bit Rate Dilemma: How Much Audio Data Do You Need?

Herman and Corn explore the science of audio compression, psychoacoustics, and finding the perfect bit rate for podcasts and AI.

#64: AI's Senses: Seeing, Hearing, Understanding

AI is evolving beyond text, learning to see, hear, and understand our world. Discover the future of human-AI interaction!

#58: Clean Audio, Messy Reality: Noise Removal for Voice-to-Text

Fussy baby, clean audio? We dive into noise removal for voice-to-text. Discover why cleaner audio can transcribe worse.

#54: Tokenizing Everything: How Omnimodal AI Handles Any Input

Omnimodal AI: How do models process images, audio, video, and text all at once? Discover the engineering behind AI that accepts anything.

#33: The Unseen Magic of AI's Ears: Decoding VAD

Ever wonder how your AI knows you're talking? We're diving deep into VAD, the unseen magic behind AI's ears.

#8: Building Your Own Whisper

Ever wondered if you could build your own speech recognition tool? We dive deep into crafting custom ASR.